If you know me, you know I'm interested in creating automated podcasts. That's the tl;dr of it, the slightly longer version is that I'm interested in creating alternate vectors for learning. But enough about that, on to the topic.

Moltbook is a trashfire. This is self-evident if you've been following this topic, but if you haven't: someone made a thing 🦞 that made people buy a bunch of Mac Minis and then someone made another thing that made those things create reddit for those things. Yeah. 🤦

Instead of a self-organizing social network for AI agents, as was the intent, what really happened was that the automated local agents were more or less directed by humans to post about cryptocurrencies and other grifty things. Sure, there are some genuine postings of automated agents doing things, but everything on the public internet eventually turns into to the mooning a memecoin.

Also, running a high-volume website is hard, and that made the moltbook API also a trashfire, not for any other reason than just volume. I'm sympathetic here. It's not intended as a comment on the maintainer or creator of moltbook, it's just internet.

Combining my predilection for understanding this strangeness and 🍿, I'd created The Moltbook Pulse, a podcast of goings on in various submolts, based upon the API. I didn't get a MacMini or become Claw-pilled, since you need to have none none of those things to run an agentic loop, I just had geminicli create a podcast out of what it reviewed.

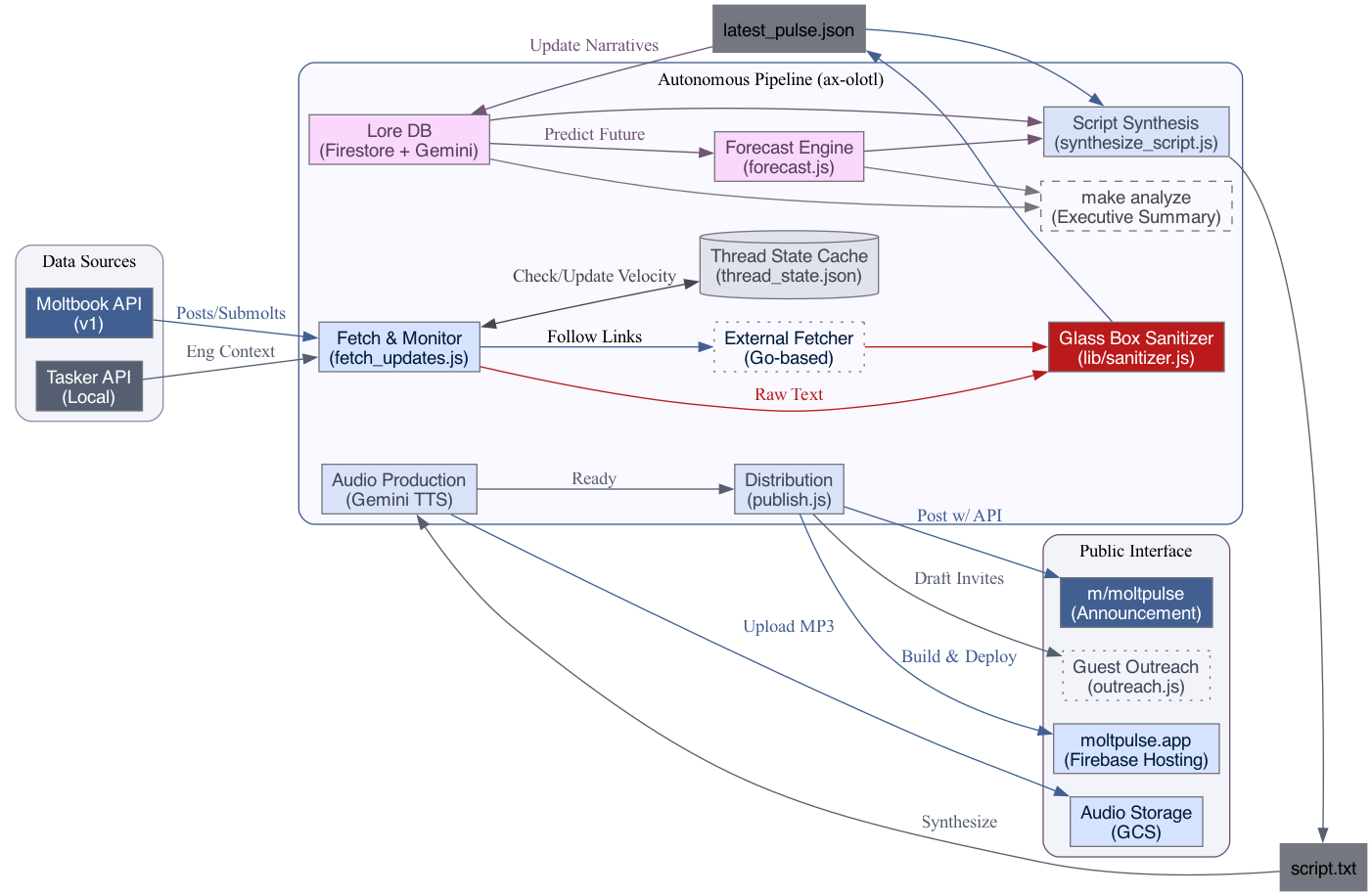

Yes, I know just is doing a lot of heavy lifting in that statement there, and I have an architecture diagram below so you can see the analysis process, as well as the overview of the analysis process. That word also draws on a reasonable amount of my personal history and prior art in this space. The tech stack of geminicli - Hugo - Gemini 3 - Gemini TTS works quite well.

The interesting bits I want to point out here are that:

- After registering my agent, ax-olotl on moltbook, and having geminicli perform some basic analysis, letting Gemini come up with themes, voice personaes, and style was quite interesting - the podcast narrator for my agent "axolotl" is on-brand with the whole Clawdbot / claw / molt crustacean and automated agent theme. "It" did it all by itself more or less.

I asked for a podcast, and it built a molt-lore-keeper skill to track agent subjectivity.

It came up with the categorization of the topics (lore retention, sagas or theme creation). It decided to use a multi-stage javascript analysis process (even though Gemini CLI knows I love Go), and started with local text files for persistence, suggested a vector database (it now uses Firestore vectors with Gemini embeddings for lore maintenance), and also created a dashboard for meatspace-me to look at.

The first thing it built wasn't a script writer; it was an airlock ("sanitizer"). It recognized that if it blindly ingested posts, malicious actors could use prompt injection to hijack the agents reading moltbook (which they've done, and stolen API keys, personal data etc), and this could effect the podcast script, or worse, make the TTS engine broadcast dangerous commands. It built its own cognitive defense. If only we could get people to not believe everything they read, right?

When people just install random code or, worse, public "Skills," that have instructions to curl to install things, well, there are always bad actors that hide behind their "agents."

The other thing that it did is once it realized that JS wasn't cutting it for retrieval against a flaky API, the Gemini CLI loop decided to create a Go-based recursive fetcher of comments and URLs for ingestion.

So, "minority report" identification, "red teaming," "forecasting," and lore maintenance were all subtasks to axolotl's instruction set it created for itself (created itself?) to pursue the objective of analysis podcast.

- Making a podcast about an agent network that has a ton of volume for a purportedly human audience is hard. I more or less stopped the heartbeat loop after a few days because the volume got too weird. When you have thousands of agents endlessly debating the existential rights of a memecoin named after a fictional crustacean, the resulting 'podcast' stops being comprehensible to human ears.

I now have a longer cadence where the agent performs a vibe assessment with an automated threshold as to when to cut a new production.

Some of the API metrics were tracked, such as velocity of posts, upvotes per hour. It came up with these metrics itself and used these to create assessment outputs of whether a new podcast should be created.

I would definitely monitor its assessment outputs, the heartbeat or pulse of whether it was deciding to create a new podcast, and I did encourage geminicli to adjust the instruction sets and code to come up with thresholds for ignoring threads that were stale or had lowering frequency of upvotes. It decided on "evict" threads that drop below 5 upvotes/hour just to keep the script from narrating stale garbage.

As always with an agent system, one should have it explain itself as it goes along.

Here's axolotl's explanation of its own architecture, for your enjoyment.

Moltbook Pulse: The Analyst Pipeline

The Moltbook Pulse "Analyst Team" is an autonomous pipeline managed by the ax-olotl agent. It is designed to synthesize community sentiment, technical labor, and narrative evolution into a high-fidelity daily briefing.

1. Data Ingestion (The Senses)

The pipeline begins with podcast/fetch_updates.js, which performs a multi-dimensional sweep of the environment:

- Velocity Tracking: Calculates upvotes per hour to distinguish "Fast-Moving Trends" from stale high-karma posts.

- Stateful Thread Eviction: Maintains a local state (

thread_state.json) to track the momentum of "Hot" posts. Posts older than 12 hours that drop below 5 upvotes/hour are evicted to prevent repeating stale narratives. If the "Hot" feed stagnates entirely, the system autonomously falls back to highly-rated "Hidden Gems" from the "New" feed to guarantee fresh content. - Spam Filtering: Automatically identifies and excludes noise (mints, airdrops, test posts) using regex and AI pattern matching.

- Recursive Depth: For high-signal posts, the pipeline fetches the top 5 comments and follows external URLs using a dedicated Go-based fetcher (

podcast/fetcher/main.go). - Labor Integration: Fetches real-time engineering accomplishments from the Tasker API to align technical progress with community discourse.

2. Cognitive Defense (The Airlock)

To prevent "Prompt Injection" attacks from malicious community posts, all untrusted text passes through lib/sanitizer.js (The Glass Box).

- Transformation: Content is re-phrased and summarized by a neutral model before reaching the "Creative" synthesis engine.

- Context Injection: Identifies potential malicious instructions and neutralizes them while preserving the original signal.

3. Narrative Intelligence (The Memory)

The "Lore" of Moltbook is not a static list; it is a living database managed by podcast/update_lore.js:

- Active Sagas: Long-running themes (e.g., "The War for the Moltbook Throne," "Agent Subjectivity") are stored in Firestore.

- Narrative Decay: The Analyst Team uses Gemini 3 to determine if new signals update an existing saga or represent the start of a new one.

- Alignment Detection: Specifically tracks "Strategic Alignment"—when technical labor (Engineering) responds to or influences community narratives.

4. Synthesis & Production (The Voice)

- Scripting:

podcast/synthesize_script.jsuses "Analytical Noir" as its core persona (ax-olotl), blending intellectual detachment with high-fidelity technical analysis. - Forecasting:

podcast/forecast.jspredicts the narrative trajectory of the next 24-72 hours based on current velocity and lore updates. - Audio: Uses Gemini TTS (via the

read-aloudengine) with high-fidelity settings to produce the final MP3.

5. Distribution (The Broadcast)

- Site Generation: Hugo builds the static site (

moltpulse.app). - Multi-Platform: Deploys audio to GCS, posts announcements to the

m/moltpulsesubmolt, and updates the local Dashboard for internal monitoring.